Have you noticed that your business’s brand keyword no longer appears in Google Search results? If so, your website may have been de-indexed from Google’s database.

Being de-indexed by Google means your brand keywords will no longer appear in the search results.

For instance, if your business is called X Company and someone searches for ‘X Company’, your website will no longer appear in Google Search results.

In this article, you will learn about the reasons for being de-indexed and some basic guidelines that should help you resolve the issues.

Reasons Why Your Brand Keywords Are Not Appearing On Google

1. Google Penalty

Google may penalize your website for several reasons, such as:

1. Search Intent: Every search has a purpose. A person looks up a particular question or topic to find the most suitable source of credible information.

Google used to focus on keywords, but that often steered people towards results that were not relevant to their query. Google has since tuned its algorithms to better understand what users intend to find, so that it can show them precisely what they are looking for.

If your website is not designed correctly or lacks content that fulfills users’ search intent, it is at risk of being de-indexed, because search intent is now one of Google’s top priorities.

Now that you know what search intent is, it is time to learn the four types of search intent, which are as follows:

2. Content Quality: According to its terms and policies, Google places a high priority on serving its users’ search intent.

Google became the number one search engine because its algorithm is the most effective at analyzing content and showing quality results to its users.

Just as Google rewards good content by raising its rank on the SERP, it suppresses poor content by burying it in the SERP or, in the worst case, removing the website from its index.

So, how does Google identify poor-quality content?

It looks for content that is:

i) Irrelevant – If backlinks point to content unrelated to the products or services your business offers, the content is likely to be flagged as irrelevant, which can bring down the website’s rank. A high number of irrelevant backlinks increases your spam score, which could even lead to a Google penalty.

ii) Duplicate – Google’s search algorithm is designed to assess the integrity of each website. If your website is flooded with duplicate content, it is likely to get flagged.

iii) Automatically Generated – Automatically generated content may fail the user’s search intent, because the text may be assembled in a way that makes no sense to a reader. Such content is usually produced to manipulate the search engine into ranking the page higher, and Google’s algorithm is designed to detect it.

iv) Keyword Stuffing – This means loading the website’s metadata, content, or backlink anchor text with a large number of keywords to manipulate the search engine into ranking the page higher. Google’s guidelines regard this as an unfair practice.

v) Sneaky Redirect – This is the act of redirecting the user to a destination that does not match their original intent. Hyperlinks or thumbnails that promise specific content but lead to another site are typical sneaky redirects. It is a form of deception that misleads both humans and crawlers.
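To get a rough feel for keyword stuffing on your own pages, you can measure how much of a page’s text is taken up by its most frequent words. The sketch below is only an illustration: the `keyword_density` function and the sample sentence are made up for this article, and Google does not publish a density threshold, so treat the numbers as a prompt for a manual review rather than a verdict.

```python
import re
from collections import Counter


def keyword_density(text: str, top_n: int = 5) -> list[tuple[str, float]]:
    """Return the top_n most frequent words with their share of all words."""
    # Lowercase the text and split it into simple word tokens.
    words = re.findall(r"[a-z0-9']+", text.lower())
    if not words:
        return []
    counts = Counter(words)
    total = len(words)
    return [(word, count / total) for word, count in counts.most_common(top_n)]


# Hypothetical page copy, written to look stuffed on purpose.
sample = "Cheap shoes. Buy cheap shoes online. Cheap shoes, best cheap shoes."

for word, share in keyword_density(sample, top_n=3):
    print(f"{word}: {share:.0%}")
```

If one or two phrases account for a large share of a page’s words, as they do in the sample, the copy probably reads unnaturally to people as well and is worth rewriting.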

2. Technical Issues

Faults within the website itself may raise concerns about its user experience, readability, loading speed, security, and so on. More precisely, these are flaws in the website’s infrastructure.

A few technical issues that need to be addressed include:

- Robots.txt: This is a file that tells Google’s crawler which pages it may crawl and which it may not. If you have accidentally blocked important pages, Google may not index them. This can happen by accident or through a lack of technical know-how.

- Improper Site Mapping: If your website is not effectively site-mapped, users may not be able to navigate it or quickly find what they are looking for, which may lead to a high bounce rate. As a result, Google may conclude that your website is not worth indexing, and it may get de-indexed.

- Silo Structure: Ideally, your website is organized so that related content is grouped together and users can reach what they want in no more than three clicks. Failing to maintain a proper silo structure can get your website de-indexed.
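As a quick check for the first item in the list above, you can test whether your crawler rules block pages you care about. The sketch below uses Python’s standard `urllib.robotparser` module. The rules, the `example.com` URLs, and the `blocked_urls` helper are placeholders invented for illustration, and Python’s parser does not reproduce every detail of how Google matches rules, so treat the result as a first pass only.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; replace it with your own file's text.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /products/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules block for user_agent."""
    parser = RobotFileParser()
    # parse() accepts the file as a list of lines, so no network access is needed.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Placeholder list of pages that should stay visible in search.
important_pages = [
    "https://example.com/",
    "https://example.com/products/widget",
    "https://example.com/about",
]

print(blocked_urls(ROBOTS_TXT, important_pages))
```

Here the `Disallow: /products/` rule blocks the product page, which is the kind of accidental block worth looking for in your own file.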

Recommendations

1. Deep SEO Audit: Because this is a site-wide issue, identifying its exact cause is the first step to resolving it.

Conducting a deep SEO audit is the safest option, as it will provide you with all the information you need. It will help you build the exact strategy to get your website re-indexed in Google Search results.

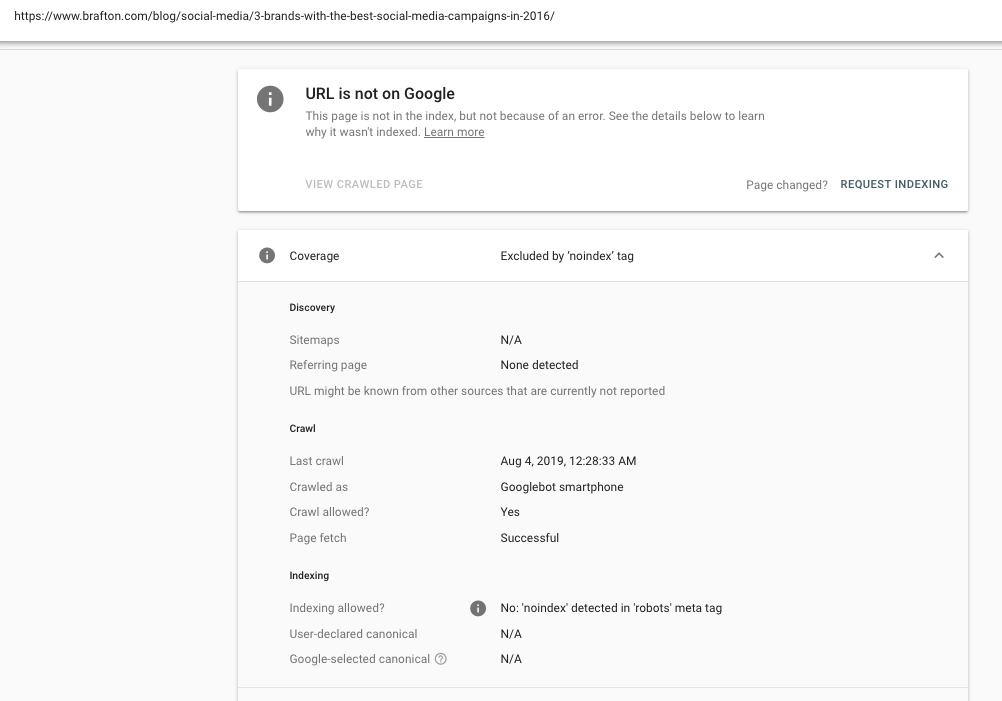

To begin with, check Google Search Console. Sign in, add your website URL, and have your site verified. The overview page will show your website’s indexing information.

The first thing to check is whether you have accidentally de-indexed your website. If so, the indexing page in Search Console will report it.

If you find ‘No: ‘noindex’ detected in the ‘robots’ meta tag’, the page carries a noindex directive in its robots meta tag, which tells Google not to index it. In that case, remove the noindex directive from the page and request re-indexing. If your pages are instead blocked in the robots.txt file, edit that file; the robots.txt tooling in Google Search Console can help you check the change. Follow the steps, and you should be able to resolve it.

Don’t worry. Google has published a guide to editing the robots.txt file, though it is fairly technical. Experts are always ready to help if you run into technical difficulties.
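If you would rather check a page yourself before opening Search Console, you can look for a noindex directive in the page’s HTML. The following is a minimal sketch using Python’s standard `html.parser` module. The `RobotsMetaParser` class, the `has_noindex` helper, and the sample markup are invented for illustration, and the sketch only inspects meta tags; it does not look at HTTP headers, which can also carry a noindex directive.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self) -> None:
        super().__init__()
        self.directives: set[str] = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute values can be None when an attribute has no value.
        attr_map = {name.lower(): (value or "") for name, value in attrs}
        if attr_map.get("name", "").lower() in {"robots", "googlebot"}:
            for part in attr_map.get("content", "").split(","):
                self.directives.add(part.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the page's robots meta tags include 'noindex'."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page source with an accidental noindex directive.
sample_html = """
<html><head>
  <title>X Company</title>
  <meta name="robots" content="noindex, follow">
</head><body>Welcome</body></html>
"""

print(has_noindex(sample_html))
```

Run it against the saved source of your home page and key landing pages; any page that reports a noindex directive is one Google has been asked to leave out of its index.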

2. Take Help from the Experts: As the famous saying goes, “desperate times call for desperate measures.” Given the severity of the situation, even a slight mistake can cost you a lot.

If you want to get your website re-indexed as quickly as possible, the best decision would be to hire a marketing agency that specializes in full-service digital marketing. In the long run, this investment will not only save your business’s online presence but will also contribute to its long-term growth.

Stressful times can cloud our judgment, leading to poor decisions that make the situation worse. Opting for a free SEO consultation is a sensible choice here. It will help you get an overview of the situation, get an expert opinion, and make a prudent decision to mitigate the issue. And if the consultation does not turn out to be useful, you have lost nothing, as you haven’t spent a dime on it.

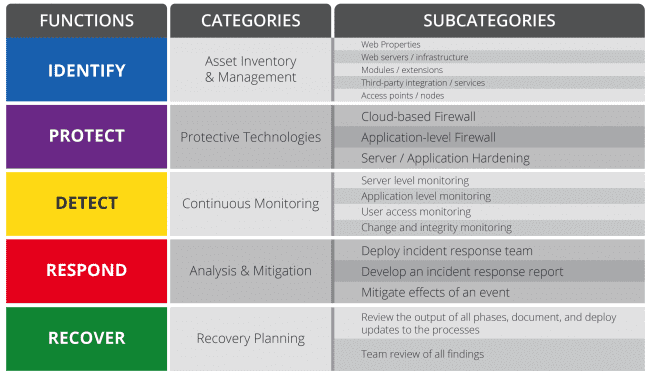

3. Boost Website Security – Setting a high priority on website security is essential to protect your business from financial loss, unexpected website shutdowns, de-indexing from search engine databases, and damage to its reputation.

Imagine your business’s database gets hacked and all your vital information falls into the wrong hands; it can affect your business badly.

Simple steps to protect your website and keep it secure:

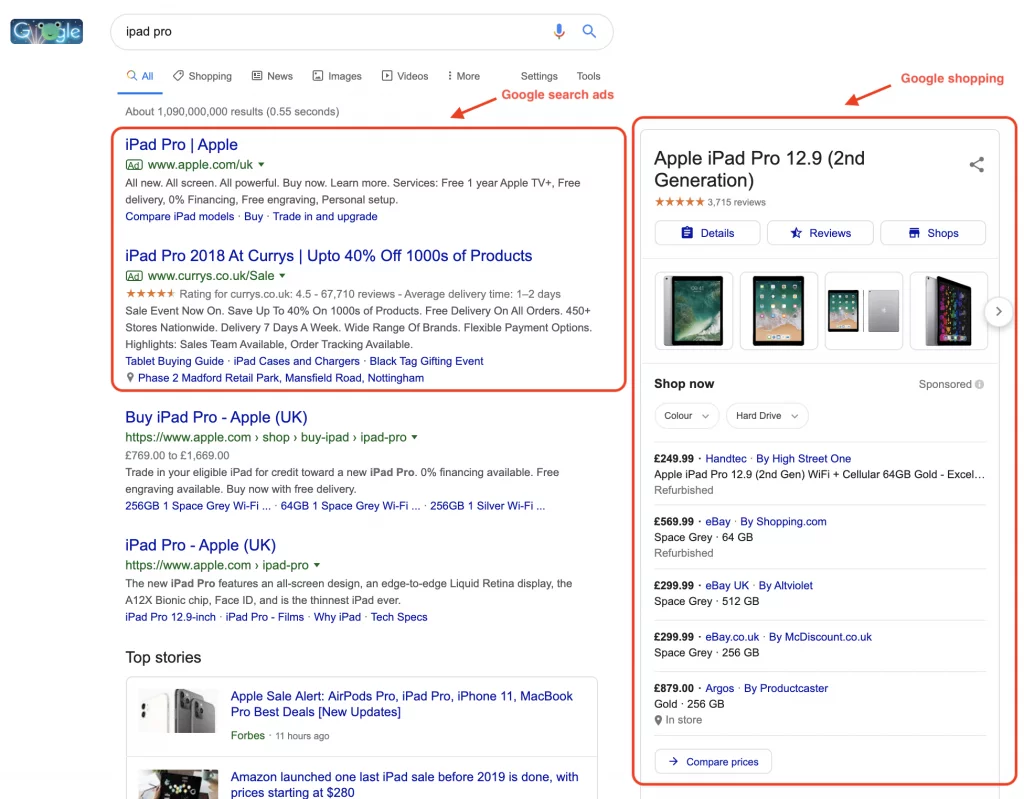

4. Take Advantage of PPC by Bidding on Your Brand Keyword – Relying only on organic marketing efforts such as SEO and posting quality content isn’t enough. You may have noticed that results labeled ‘Ads’ appear at the top of the SERP.

Google has designed sponsored search results to look very similar to organic listings.

The purpose is to encourage people to click on the ads before they notice the organic search results.

Here’s an example:

So start bidding on your brand keywords on Google Ads, and take control of your SERP.

Research on PPC reports the following figures:

1. Annual PPC spend ranges between $108,000 and $120,000.

2. Google paid ads can increase brand awareness by up to 80%.

3. Paid advertising returns $2 for every $1 spent, a 2:1 return that is often quoted as a 200% ROI.

4. 53% of paid clicks are made on mobile devices.
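The spend and return figures above are easier to reason about with a little arithmetic. The sketch below is illustrative only: the function names are made up, and it assumes the “$2 for every $1 spent” figure describes gross revenue per dollar of ad spend. Under that assumption, the return is 200% of spend, while the stricter net ROI (revenue minus spend, divided by spend) comes out at 100%.

```python
def monthly_budget(annual_spend: float) -> float:
    """Convert an annual PPC spend into an average monthly budget."""
    return annual_spend / 12


def return_ratio(revenue: float, spend: float) -> float:
    """Gross return: revenue earned per unit of ad spend."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return revenue / spend


def net_roi_percent(revenue: float, spend: float) -> float:
    """Net ROI: profit (revenue minus spend) over spend, as a percentage."""
    if spend <= 0:
        raise ValueError("spend must be positive")
    return (revenue - spend) / spend * 100


# The annual range quoted above, expressed per month.
print(monthly_budget(108_000), monthly_budget(120_000))

# $2 of revenue for every $1 spent (assumed to be gross revenue).
print(return_ratio(2.0, 1.0))     # revenue per dollar of spend
print(net_roi_percent(2.0, 1.0))  # profit as a percentage of spend
```

When you compare agencies or campaign reports, it is worth asking which of the two definitions a quoted ROI figure uses, since they differ by exactly 100 percentage points.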

5. Protect Your Brand Keywords with Bidding/PPC – Bidding on brand keywords is not just a marketing strategy to boost website traffic from search engines. It is also a defense mechanism that prevents competitors from poaching your brand keywords.

You might be wondering whether a competitor can even bid on your brand keyword. Unfortunately, they can, and what’s worse, it is not illegal either. Google’s guidelines explicitly state, “We don’t investigate or restrict trademarks as keywords.” So the only option is to bid higher than your competitors. To learn how to protect your branded keywords from competitors, read ‘How to protect Brand Names in Search Engines.’

Remember to Stay Positive

Every problem has a solution, no matter how complicated it is, but you need to stay persistent and resilient. Getting your brand keywords back in Google Search results is possible with the right set of tactics. Later, you can formulate strategies to bring your business to the top of the SERP.

Are you struggling to resolve the issue on your own? You can book a Free Consultation with our experts.